Adobe Character Animator training
3/28/2023

These new capabilities make After Effects use for the application of digital makeup both easier and faster.

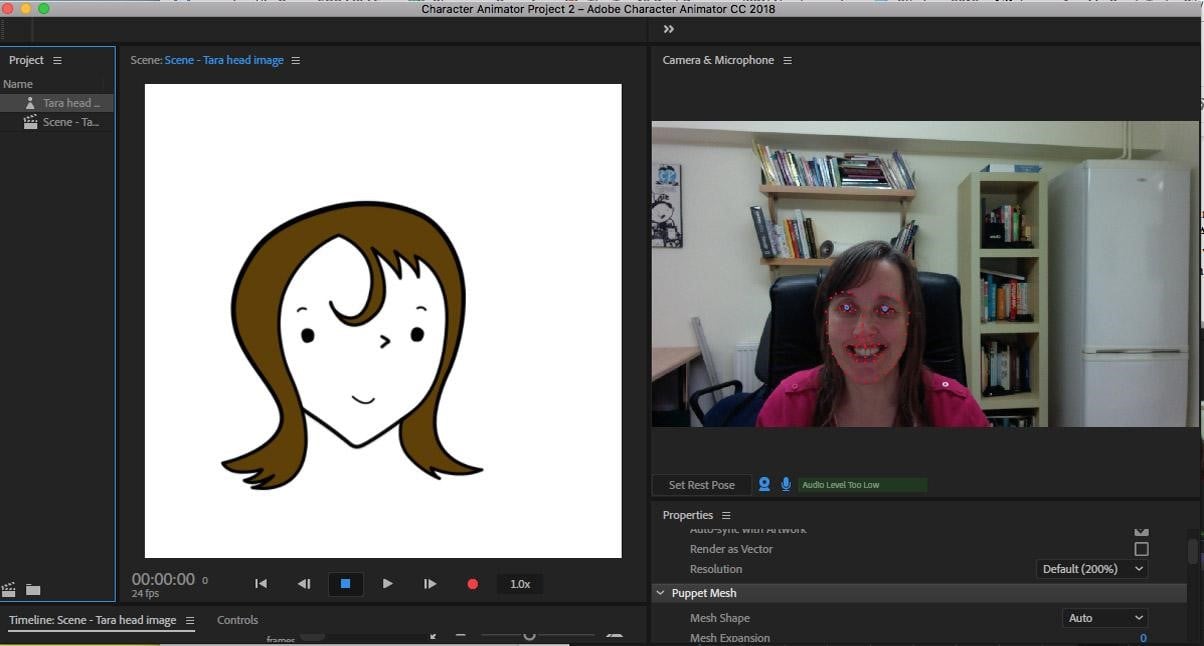

The next version of After Effects will track faces in camera shots and can be used to create masks for isolating faces or generating control points for effects around all facial features.īecause control points for effects can be easily added to facial features, it is now easier to apply effects and layers to individual parts of the face with great precision. The face tracking technology used by Adobe Character Animator has also been integrated directly into After Effects as well. It is installed as part of After Effects and it can also be accessed from within After Effects. Subscribers to the Creative Cloud will have access to Adobe Character Animator along with the existing applications that are part of the subscriptions. It uses the web camera and microphone from a computer to mimic facial expressions and coordinate mouth movements on the animation to those by the user in front of the camera and on the microphone. The difference is the process by which Adobe Character Animator creates animations from these images. As with these other tools, artwork and images from Illustrator and Photoshop can be imported and animated. Adobe Character Animator is different from these existing tools. The new product is Adobe Character Animator, and will be available as part of the Adobe Creative Cloud.Īdobe has a history of providing animation tools, with existing animation tools such as Flash, After Effects, and Edge Animate. Graphic Design for High School StudentsĪdobe has announced a new app to create animations, which is available as preview version.
